Category: web

-

Firefox should become the best freaking Fediverse app

The always awesome and insightful Luis wrote a post 9 years ago about how the web browser should become an RSS reader. Super important set of ideas and right on. Meanwhile, Mozilla has announced their intent to join the Fediverse by standing up a Mastodon instance in early 2023. I think Mozilla should make Firefox…

-

Don’t let federation make the experience suck

I’m pessimistic about federated architectures for new end-user products, like Mastodon. But, I could be wrong. In fact, I’d love to be wrong on this one. So since Blaine fairly called me out for implying that the fediverse can’t be better than Twitter, I’m gonna try to help, at least at first by pushing on…

-

managing photos and videos

This holiday, I finally spent time digging into how I manage photos and videos. With 2 young kids and some remote family and friends, this requires a good bit of thinking and planning. I know I’m not the only one, so I figured documenting where I landed might be useful to others. I started with…

-

Power & Accountability

So there’s this hot new app called Secret. The app is really clever: it prompts you to share secrets, and it sends those secrets to your social circle. It doesn’t identify you directly to your friends. Instead, it tells readers that this secret was written by one of their friends without identifying which one. The…

-

getting web sites to adopt a new identity system

My team at Mozilla works on Persona, an easy and secure web login solution. Persona delivers to web sites and apps just the right information for a meaningful login: an email address of the user’s choice. Persona is one of Mozilla’s first forays “up the stack” into web services. Typically, at Mozilla, we improve the…

-

Firefox is the unlocked browser

Anil Dash is a man after my own heart in his latest post, The Case for User Agent Extremism. Please go read this awesome post: One of my favorite aspects of the infrastructure of the web is that the way we refer to web browsers in a technical context: User Agents. Divorced from its geeky…

-

the Web is the Platform, and the User is the User

Mid-2007, I wrote two blog posts — get over it, the web is the platform and the web is the platform [part 2] that turned out to be quite right on one front, and so incredibly wrong on another. Let’s start with where I was right: Apps will be written using HTML and JavaScript. […]…

-

connect on your terms

I want to talk about what we, the Identity Team at Mozilla, are working on. Mozilla makes Firefox, the 2nd most popular browser in the world, and the only major browser built by a non-profit. Mozilla’s mission is to build a better Web that answers to no one but you, the user. It’s hard to…

-

cookies don’t track people. people track people.

The news shows are in a tizzy: Google violated your privacy again [CBS, CNN] by circumventing Safari’s built-in tracking protection mechanism. It’s great to see a renewed public focus on privacy, but, in this case, I think this is the wrong problem to focus on and the wrong message to send. what happened exactly (Want…

-

a simpler, webbier approach to Web Intents (or Activities)

A few months ago, Mike Hanson and I started meeting with James, Paul, Greg, and others on the Google Chrome team. We had a common goal: how might web developers build applications that talk to each other in a way that the user, not the site, decides which application to use? For example, how might…

-

encryption is (mostly) not magic

A few months ago, Sony’s Playstation Network got hacked. Millions of accounts were breached, leaking physical addresses and passwords. Sony admitted that their data was “not encrypted.” Around the same time, researchers discovered that Dropbox stores user files “unencrypted.” Dozens (hundreds?) closed their accounts in protest. They’re my confidential files, they cried, why couldn’t you…

-

BrowserID and me

A few weeks ago, I became Tech Lead on Identity and User Data at Mozilla. This is an awesome and challenging responsibility, and I’ve been busy. When I took on this new responsibility, BrowserID was already well under way, so we were able to launch it in my second week on the project (early July).…

-

and the laws of physics changed

Google just introduced Google Plus, their take on social networking. Unsurprisingly, Arvind has one of the first great reviews of its most important feature, Circles. Google Circles effectively let you map all the complexities of real-world privacy into your online identity, and that’s simply awesome. You can think of Circles as the actual circles of…

-

Online Voting is Terrifying and Inevitable

Voting online for public office is a terrifying proposition to most security experts. The paths to subversion or failure are many: the server could get overwhelmed by attackers, preventing voting altogether; the server could get hacked and the votes changed surreptitiously; the users' machines could get compromised by a virus, which would then flip votes…

-

grab the pitchforks!… again

I’m fascinated with how quickly people have reached for the pitchforks recently when the slightest whiff of a privacy/security violation occurs. Last week, a few interesting security tidbits came to light regarding Dropbox, the increasingly popular cloud-based file storage and synchronization service. There’s some interesting discussion of de-duplication techniques which might lead to oracle attacks,…

-

intelligently designing trust

For the past week, every security expert’s been talking about Comodo-Gate. I find it fascinating: Comodo-Gate goes to the core of how we handle trust and how web architecture evolves. And in the end, this crisis provides a rare opportunity. warning signs Last year, Chris Soghoian and Sid Stamm published a paper, Certified Lies [PDF],…

-

degrees of trust: software vs. data hosts

Overjoyed by all the SSL goodness around me (Twitter offers SSL-only as an option, as does Facebook, and Google offers 2-factor auth), I started dutifully upgrading my web browsing experience on Firefox, specifically installing the EFF add-on that turns on HTTPS everywhere it can, in particular when using Google (it uses encrypted.google.com by default). I googled…

-

the difference between privacy and security

Facebook today rolled out new security features, both of which are awesome: SSL everywhere, and social re-authentication. True, SSL everywhere should probably be a default, even though I continue to believe that the cost is significantly underestimated by many privacy advocates. Regardless, this announcement is great news. The only nitpick I have, and I point…

-

Facebook, the Control Revolution, and the Failure of Applied Modern Cryptography

In the late 1990s and early 2000s, it was widely assumed by most tech writers and thinkers, myself included, that the Internet was a “Control Revolution” (to use the words of Andrew Shapiro, author of a book with that very title in 1999). The Internet was going to put people in control, to enable buyers…

-

an answer to John Gruber: Google dropping H.264 is good for everyone

Google just dropped support for H.264 in Chrome. John Gruber, among others, is not happy. Now, John Gruber is a very smart guy, but his Apple bias is too much even for me, and it’s preventing him from seeing what is fairly obvious. So, allow me to answer John’s questions, even though I have no…

-

privacy icons

Aza Raskin has posted alpha 1 of the proposed Mozilla Privacy Icons. I was at the Mozilla-sponsored get-together where this was first discussed, and I’m really happy to see this moving forward. A few quick thoughts: the least useful of the icons is the “used only for intended use.” I don’t think that icon can…

-

OK, let’s work to make SSL easier for everyone

So in the wake of the Firesheep situation, which I described yesterday, the tech world is filled with people talking past each other on one important topic: should we just switch everything over to SSL? As I stated yesterday, I don’t think that’s going to happen anytime soon. I would love to be wrong, because…

-

keep your hands off my session cookies

For years, security folks — myself included — have warned about the risk of personalized web sites such as Google, Facebook, Twitter, etc. being served over plain HTTP, as opposed to the more secure HTTPS, especially given the proliferation of open wifi networks. But warnings from security freaks rarely get people’s attention. A demonstration is…

-

Facebook can and should do more to proactively protect users

A few days ago, the Wall Street Journal revealed that Facebook apps were leaking user information to ad networks. Today, Facebook proposed a scheme to address this issue. This is good news, but I’m concerned that Facebook’s proposal doesn’t address the underlying issue fully. Facebook could be doing a lot more to protect its users,…

-

an unwarranted bashing of Twitter’s oAuth

Ryan Paul over at ArsTechnica claims a compromise of Twitter’s oAuth system, but fails to demonstrate such a compromise. It’s unfortunate, because some of his comments are indeed worthwhile, and there are a few interesting recommendations that Twitter should follow (hah, no pun intended). But what we have here is not a “compromise”, and the…

-

browser extensions = user freedom

The web browser has become the universal trusted client. That can be good: users can mostly rely on their browsers to isolate their banking site from the other web sites they visit. It can also be bad for users’ freedom: Facebook can encourage the world to add “Like” buttons everywhere, and suddenly users are being…

-

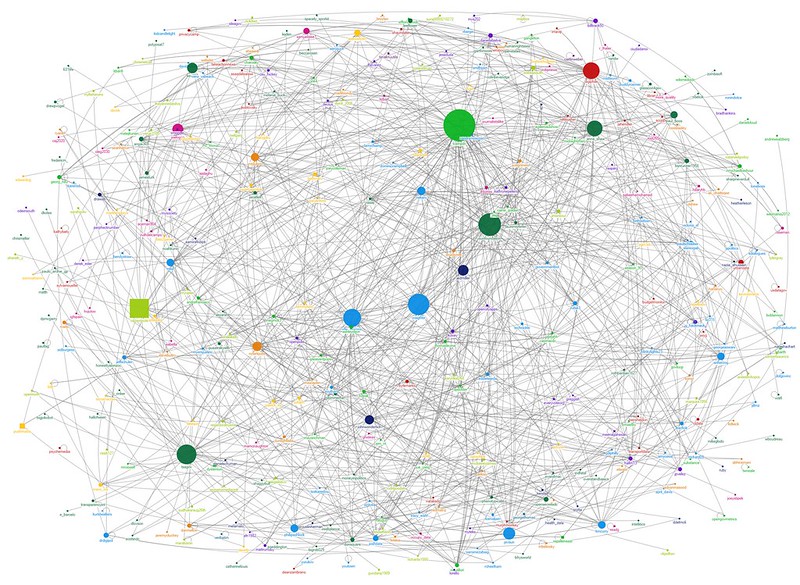

distributed innovation

A few years ago, a small group of folks (Mark Birbeck, Steven Pemberton, Ralph Swick, Shane McCarron, me, and more recently Ivan Herman, Manu Sporny, and a lot of great new folks) started with the simple idea that, if web pages contained a bit of structured data in addition to their haphazard content, we could…

-

The Great Content Lockdown of 2010

I had an invigorating and thought-provoking chat with my good friend Oliver Roup today. We agreed that the Apple iPad is going to be an unbelievable success. I’ve thought from day one that it would be huge, but I think it will be bigger than huge. Before the end of the summer, millions of people…

-

Protecting against web history sniffing attacks: an alternative

When a web site links to another web site, the link appears in a different color, usually a lighter shade of blue, if you’ve already visited the site. Unfortunately, this means that a malicious web site can learn what sites you visit by putting up a few links and checking to see how your browser…