Category: privacy

-

Affirmative sourcing of online media

We are well into the era of highly believable fake photos and videos. The major crises of disinformation that these fake photos will enable are still ahead of us, and they could be very damaging. Swift (or Mark Twain?) was right: a lie travels halfway around the world while the truth is putting on its…

-

the responsibility we have as software engineers

I had the chance to chat this week with the very awesome Kate Heddleston, who mentioned that she’s been thinking a lot about the ethics of being a software engineer, something she just spoke about at PyCon Sweden. It brought me back to a post I wrote a few years ago, where I said: There’s this…

-

Power & Accountability

So there’s this hot new app called Secret. The app is really clever: it prompts you to share secrets, and it sends those secrets to your social circle. It doesn’t identify you directly to your friends. Instead, it tells readers that this secret was written by one of their friends without identifying which one. The…

-

Letter to President Obama on Surveillance and Freedom

Dear President Obama, My name is Ben Adida. I am 36, married, two kids, working in Silicon Valley as a software engineer with a strong background in security. I’ve worked on the security of voting systems and health systems, on web browsers and payment systems. I enthusiastically voted for you three times: in the 2008…

-

a hopeful note about PRISM

You know what? I’m feeling optimistic suddenly. Mere hours ago, all of us tech/policy geeks lost our marbles over PRISM. And in the last hour, we’ve got two of the most strongly worded surveillance rebuttals I’ve ever seen from major Internet companies. Here’s Google’s CEO Larry Page: we provide user data to governments only in…

-

what happens when we forget who should own the data: PRISM

Heard about PRISM? Supposedly, the NSA has direct access to servers at major Internet companies. This has happened before, e.g. when Sprint provided law enforcement a simple data portal they could use at any time. They used it 8 million times in a year. That said, the scale of this new claim is a bit…

-

Firefox is the unlocked browser

Anil Dash is a man after my own heart in his latest post, The Case for User Agent Extremism. Please go read this awesome post: One of my favorite aspects of the infrastructure of the web is that the way we refer to web browsers in a technical context: User Agents. Divorced from its geeky…

-

connect on your terms

I want to talk about what we, the Identity Team at Mozilla, are working on. Mozilla makes Firefox, the 2nd most popular browser in the world, and the only major browser built by a non-profit. Mozilla’s mission is to build a better Web that answers to no one but you, the user. It’s hard to…

-

cookies don’t track people. people track people.

The news shows are in a tizzy: Google violated your privacy again [CBS, CNN] by circumventing Safari’s built-in tracking protection mechanism. It’s great to see a renewed public focus on privacy, but, in this case, I think this is the wrong problem to focus on and the wrong message to send. what happened exactly (Want…

-

encryption is (mostly) not magic

A few months ago, Sony’s PlayStation Network got hacked. Millions of accounts were breached, leaking physical addresses and passwords. Sony admitted that their data was “not encrypted.” Around the same time, researchers discovered that Dropbox stores user files “unencrypted.” Dozens (hundreds?) closed their accounts in protest. They’re my confidential files, they cried, why couldn’t you…

-

and the laws of physics changed

Google just introduced Google Plus, their take on social networking. Unsurprisingly, Arvind has one of the first great reviews of its most important feature, Circles. Google Circles effectively let you map all the complexities of real-world privacy into your online identity, and that’s simply awesome. You can think of Circles as the actual circles of…

-

with great power…

When Arvind writes something, I tend to wait until I have a quiet moment to read it, because it usually packs a particularly high signal to noise ratio. His latest post In Silicon Valley, Great Power but No Responsibility, is awesome: We’re at a unique time in history in terms of technologists having so much…

-

(your) information wants to be free

A couple of weeks ago, Epsilon, an email marketing firm, was breached. If you are a customer of Tivo, Best Buy, Target, The College Board, Walgreens, etc., that means your name and email address were accessed by some attacker. You probably received a warning to watch out for phishing attacks (assuming it wasn’t caught in…

-

grab the pitchforks!… again

I’m fascinated with how quickly people have reached for the pitchforks recently when the slightest whiff of a privacy/security violation occurs. Last week, a few interesting security tidbits came to light regarding Dropbox, the increasingly popular cloud-based file storage and synchronization service. There’s some interesting discussion of de-duplication techniques which might lead to oracle attacks,…

-

degrees of trust: software vs. data hosts

Overjoyed by all the SSL goodness around me (Twitter offers SSL-only as an option, so does Facebook, Google offers 2-factor auth), I started dutifully upgrading my web browsing experience on Firefox, specifically installing the EFF Add-On that turns on HTTPS everywhere it can, in particular when using Google (it uses encrypted.google.com by default). I googled…

-

the difference between privacy and security

Facebook today rolled out new security features, both of which are awesome: SSL everywhere, and social re-authentication. True, SSL everywhere should probably be a default, even though I continue to believe that the cost is significantly underestimated by many privacy advocates. Regardless, this announcement is great news. The only nitpick I have, and I point…

-

Facebook, the Control Revolution, and the Failure of Applied Modern Cryptography

In the late 1990s and early 2000s, it was widely assumed by most tech writers and thinkers, myself included, that the Internet was a “Control Revolution” (to use the words of Andrew Shapiro, author of a book with that very title in 1999). The Internet was going to put people in control, to enable buyers…

-

privacy icons

Aza Raskin has posted alpha 1 of the proposed Mozilla Privacy Icons. I was at the Mozilla-sponsored get-together where this was first discussed, and I’m really happy to see this moving forward. A few quick thoughts: the least useful of the icons is the “used only for intended use.” I don’t think that icon can…

-

Crisis in the Java Community… could they have used a secret-ballot election?

There is a bit of a crisis in the Java community: the Apache Foundation just resigned its seat on the Java Executive Committee, as did two individual members, Doug Lea and Tim Peierls. From what I understand, the central issue appears to be that Oracle, the new Java “owner” since they acquired Sun Microsystems, is…

-

The Health IT report is very good; some opinionated suggestions

“Oy,” I thought, when I received a copy of “REPORT TO THE PRESIDENT REALIZING THE FULL POTENTIAL OF HEALTH INFORMATION TECHNOLOGY TO IMPROVE HEALTHCARE FOR AMERICANS: THE PATH FORWARD” [PDF]. I worried this would be a lot of vague, easy-to-agree-with advice with little actionable material. I was wrong. Hats off to the team that wrote…

-

airport privacy

Today, I opted out of the TSA’s “advanced imaging” system at San Francisco International airport. To the TSA’s credit, they behaved very professionally. As soon as I said I was opting out, a manager came over and asked me why, wrote down my reason, and very politely directed me to a patdown. The TSA agent…

-

Facebook can and should do more to proactively protect users

A few days ago, the Wall Street Journal revealed that Facebook apps were leaking user information to ad networks. Today, Facebook proposed a scheme to address this issue. This is good news, but I’m concerned that Facebook’s proposal doesn’t address the underlying issue fully. Facebook could be doing a lot more to protect its users,…

-

browser extensions = user freedom

The web browser has become the universal trusted client. That can be good: users can mostly rely on their browsers to isolate their banking site from the other web sites they visit. It can also be bad for users’ freedom: Facebook can encourage the world to add “Like” buttons everywhere, and suddenly users are being…

-

devices, payload data, and why Kim is (in part) right.

A few days ago, I wrote about privacy advocacy theater and lamented how some folks, including EPIC and Kim Cameron, are attacking Google in a needlessly harsh way for what was an accidental collection of data. Kim Cameron responded, and he is right to point out that my argument, in the Google case, missed an…

-

Privacy Advocacy Theater

Ed Felten recently used the very nice term Privacy Theater in describing the insanity of 6,000-word privacy agreements that we pretend to understand. The term, inspired by Bruce Schneier’s “security theater” description of US airport security, may have been introduced by Rohit Khare in December 2009 on TechCrunch, where he described how “social networks only…

-

For deniability, faking data even the owner can’t prove is fake

I was speaking with a colleague yesterday about Loopt, the location-based social network, the rise of location-based services and the incredible privacy challenges they present. I heard the Loopt folks give a talk a few months ago, and I was generally impressed with the measures they’re taking to protect their users’ data. I particularly enjoyed…

-

Buzz Kill

Everyone is talking about the privacy disaster that was the Google Buzz launch, and oh my goodness it was. I’ve never been so thankful that I don’t use Gmail. I’m frankly surprised that they didn’t do a smaller beta first, or that there isn’t a group at Google charged with thinking about the privacy implications…

-

One real issue behind the Mint.com sale to Intuit: who owns the data?

A few days ago, mint.com, a fantastic online personal finance tool, was sold to Intuit. A number of users are disappointed, and some are downright pissed, claiming the “next generation bends over.” Well, first of all, that’s ridiculous, a company sells when it wants to sell, and there are many ways to change the world,…

-

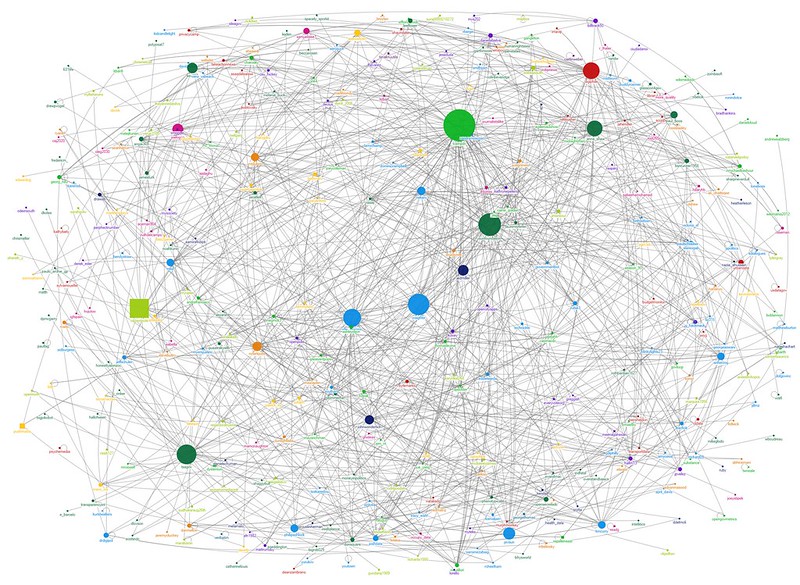

A Partial Report from Social Network Security 2009 @ Stanford

On Friday, I attended Social Network Security 2009 at Stanford. This was a fantastic get-together, with some very interesting info from Facebook, Google, Yahoo, Loopt, and the research front. I have some notes, mostly from the first half of the day, at which point my laptop battery ran out. Time to upgrade to the 7-hour…